Behind every turn, stop, and lane change of an autonomous vehicle is a complex system of algorithms trained on millions of driving scenarios. Machine learning gives self-driving cars the ability to interpret their surroundings, anticipate risks, and act with precision in unpredictable environments.

Self-driving cars are no longer experimental concepts reserved for tech labs and billion-dollar prototypes. They are real, road-tested systems powered by massive amounts of data, complex algorithms, and artificial intelligence models that mimic human perception and judgment. But unlike humans, these vehicles don’t rely on instinct—they rely on code.

Machine learning plays a critical role in helping autonomous cars make sense of their environment, anticipate risks, and make safe, split-second decisions. The ability to “learn” from millions of driving scenarios enables these systems to become more precise over time—reducing errors, improving safety, and inching closer to full autonomy.

But how does machine learning actually work in the context of autonomous vehicles? What kinds of data do these cars process, and how do they turn that data into actions like braking, steering, or changing lanes? And more importantly, can we trust these systems with the complexity of real-world roads?

This article breaks down the vital role of machine learning in self-driving cars—from perception to prediction to planning—and explores how AI is redefining the future of mobility.

What Is Machine Learning in Self-Driving Cars and Why It Matters for Autonomous Driving

Machine learning is a branch of artificial intelligence (AI) that enables systems to identify patterns in data and make decisions without being explicitly programmed. Unlike traditional software, where each rule must be manually coded, machine learning models are trained on large amounts of data, allowing them to generalize from previous examples and adapt to new inputs.

In the context of autonomous vehicles, machine learning is not just a convenience—it’s a necessity. The driving environment is dynamic and unpredictable, filled with variables like pedestrians, cyclists, sudden weather changes, and inconsistent road signage. No rule-based system could ever account for every possible scenario. Machine learning, however, gives self-driving cars the ability to learn from real-world examples and continuously improve as they encounter more complex situations.

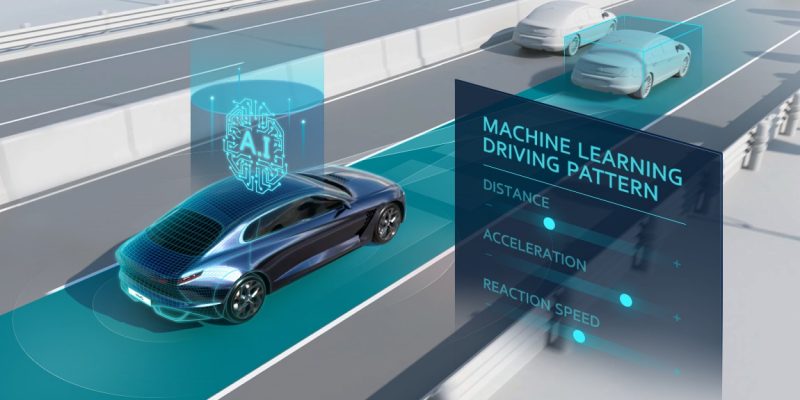

For autonomous systems, machine learning supports three foundational capabilities:

- Perception – Understanding what’s around the vehicle (e.g., recognizing traffic signs, detecting pedestrians).

- Prediction – Anticipating what other road users will do next.

- Decision-making – Choosing how the vehicle should respond in real time.

Each of these layers is supported by different types of algorithms and sensor inputs, all working together to create a real-time interpretation of the world around the vehicle. Without machine learning, self-driving cars would be rigid, unsafe, and unable to function in anything beyond a carefully controlled environment.

In short, machine learning transforms autonomous driving from a scripted task into an adaptive, responsive system—capable of navigating the same messy, chaotic roads we do every day.

Core Functions of Machine Learning in Self-Driving Cars

Machine learning is at the heart of how self-driving cars operate in real time. Unlike traditional driver-assist systems that follow pre-programmed rules, machine learning models are trained to interpret complex, dynamic environments and act accordingly.

In an autonomous vehicle, the decision-making pipeline typically involves three key layers: perception, prediction, and planning/control. Each layer depends on specific machine learning techniques to function effectively.

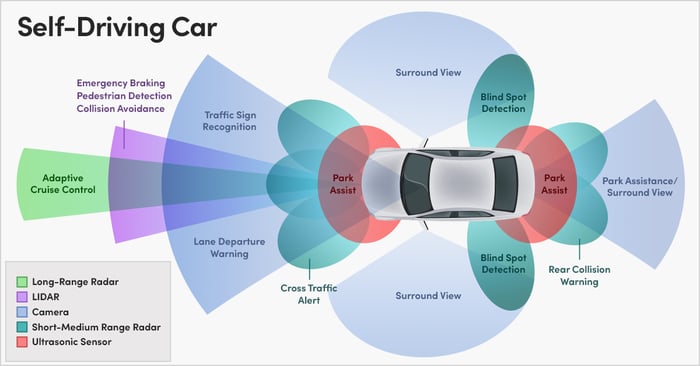

Perception: Understanding the Environment

Perception is the first step. It involves recognizing and interpreting everything around the vehicle—lanes, traffic lights, road signs, pedestrians, and other vehicles. Machine learning enables the system to identify objects using computer vision, LiDAR point clouds, and radar data. Deep learning models like convolutional neural networks (CNNs) are widely used here to classify and detect objects in real time.
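To make the CNN idea more concrete, here is a minimal sketch of the sliding-filter operation that convolutional layers are built on. The image patch and the vertical-edge filter are made up for illustration; in a trained network the filter values would be learned from data rather than written by hand.

```python
def conv2d(image, kernel):
    """Valid (no padding) sliding-window filter, the core operation of a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output

# Toy 4x6 grayscale patch: dark asphalt (0) on the left, bright lane paint (9) on the right.
patch = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

# Hand-written vertical-edge filter (an assumption for this example).
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = conv2d(patch, vertical_edge)
print(feature_map)  # large values mark where the dark-to-bright edge sits
```

The output is large only where the filter straddles the boundary between asphalt and paint, which is how early CNN layers pick out edges that later layers combine into shapes such as signs or pedestrians.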

Prediction: Anticipating Movement

Once the car knows what surrounds it, it must anticipate what those elements are likely to do next. Will the pedestrian step into the crosswalk? Is the cyclist preparing to turn? Machine learning helps the vehicle predict the behavior of other road users by analyzing patterns and previous trajectories.
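A useful way to see what prediction has to produce is a simple baseline. The sketch below is not a machine learning model: it assumes the road user keeps moving at the last observed velocity, which is a common reference point that learned predictors are expected to beat. The track coordinates and the one-second sampling interval are hypothetical.

```python
def predict_positions(track, horizon_steps):
    """Extrapolate future (x, y) positions, assuming the last observed velocity stays constant."""
    (x_prev, y_prev), (x_last, y_last) = track[-2], track[-1]
    vx, vy = x_last - x_prev, y_last - y_prev  # displacement per time step
    return [(x_last + vx * k, y_last + vy * k) for k in range(1, horizon_steps + 1)]

# Hypothetical pedestrian track in metres, sampled once per second.
pedestrian_track = [(0.0, 5.0), (0.5, 4.0), (1.0, 3.0)]
future = predict_positions(pedestrian_track, horizon_steps=3)
print(future)
```

A learned model would replace the constant-velocity assumption with patterns drawn from many recorded trajectories, for example that pedestrians tend to slow down at a curb.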

Planning and Control: Making Driving Decisions

Planning refers to determining the safest and most efficient course of action based on the perception and prediction layers. Should the car accelerate, brake, change lanes, or slow down? These decisions are made within milliseconds using ML models trained on millions of driving scenarios.
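One simple way to picture planning is as scoring a handful of candidate actions and picking the cheapest. The sketch below assumes the prediction layer has already estimated, for each action, the smallest gap to other road users, the deceleration needed, and the distance covered. The candidate numbers and the cost weights are hand-picked for illustration; a learned planner would tune or replace them.

```python
def action_cost(min_gap_m, decel_mps2, progress_m, safe_gap_m=5.0):
    """Lower is better. Weights are illustrative assumptions, not tuned values."""
    safety = 100.0 * max(0.0, safe_gap_m - min_gap_m)  # penalise gaps below the safe margin
    comfort = 2.0 * decel_mps2                         # penalise harsh braking
    progress = -0.5 * progress_m                       # reward distance covered
    return safety + comfort + progress

# action: (predicted minimum gap in m, deceleration in m/s^2, progress in m)
candidates = {
    "keep_speed": (1.0, 0.0, 30.0),
    "slow_down": (6.0, 2.0, 22.0),
    "brake_hard": (12.0, 7.0, 10.0),
}

best_action = min(candidates, key=lambda name: action_cost(*candidates[name]))
print(best_action)
```

Here keeping speed is rejected because the predicted gap falls below the safety margin, and braking hard is rejected because it costs comfort and progress without adding needed safety.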

Table: How Machine Learning Powers Each Layer of Autonomous Driving

| Function | Purpose | Key ML Techniques Used | Real-World Example |
|---|---|---|---|
| Perception | Recognize and interpret the vehicle’s surroundings | CNNs, semantic segmentation, object detection | Detecting a pedestrian at night using infrared camera and radar |
| Prediction | Forecast future behavior of nearby objects | Time-series models, behavioral cloning | Predicting a cyclist’s sudden lane change |
| Planning | Decide on the vehicle’s next action | Reinforcement learning, decision trees | Smoothly merging into highway traffic |

This layered architecture is what gives autonomous vehicles the ability to mimic human-like reasoning while benefiting from the speed and precision of machine-based computation.

Types of Machine Learning Used in Self-Driving Cars

Self-driving cars rely on a variety of machine learning approaches to handle the complexity of real-world driving. Each type of machine learning plays a distinct role depending on the task—whether it’s recognizing objects, predicting outcomes, or learning optimal driving behaviors through experience.

Understanding the types of machine learning used in autonomous driving helps clarify how these vehicles evolve and improve over time.

Supervised Learning

This is the most common form of machine learning used in self-driving systems. In supervised learning, models are trained on labeled datasets—where each input (like a road image) has a known output (e.g., “traffic light,” “pedestrian,” “lane marker”).

These models learn to associate patterns in data with specific outcomes. Supervised learning is used heavily in perception tasks, such as:

- Image classification (e.g., stop signs, pedestrians)

- Lane detection

- Road type identification
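As a rough illustration of learning from labeled examples, the sketch below trains a nearest-centroid classifier, one of the simplest supervised methods. It is far simpler than the deep networks used in real perception stacks, and the two features (share of red pixels, number of corners) and all sample values are invented for the example.

```python
from collections import defaultdict

def train_centroids(samples):
    """Average the feature vectors of each label; `samples` is a list of (features, label)."""
    sums = {}
    counts = defaultdict(int)
    for features, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}

def predict(centroids, features):
    """Return the label whose centroid is closest to `features`."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(centroids, key=lambda label: sq_dist(centroids[label], features))

# Hypothetical labeled data: (share of red pixels, number of corners) -> sign type.
labeled = [
    ((0.9, 8), "stop_sign"),
    ((0.8, 8), "stop_sign"),
    ((0.3, 3), "yield_sign"),
    ((0.4, 3), "yield_sign"),
]

centroids = train_centroids(labeled)
print(predict(centroids, (0.7, 7)))  # an unseen, slightly distorted sign
```

The key point is the workflow: known input and output pairs go in, and the model that comes out can label inputs it has never seen.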

Unsupervised Learning

Unlike supervised learning, unsupervised learning does not rely on labeled data. Instead, it seeks patterns and relationships within the data itself.

This technique is useful for:

- Clustering driving scenarios or behaviors

- Understanding traffic flow patterns

- Grouping similar objects without pre-defined categories

In self-driving applications, unsupervised learning helps detect anomalies, group similar road situations, and improve generalization across unfamiliar environments.
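Clustering can be sketched with k-means, a standard unsupervised algorithm. The example below groups hypothetical average segment speeds into two clusters without being told which segments are urban and which are highway. The speed values and the choice of two starting centroids are assumptions made for the illustration.

```python
def kmeans_1d(values, centroids, iterations=10):
    """Plain k-means on scalar values: assign to the nearest centroid, then recompute means."""
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for v in values:
            nearest = min(range(len(centroids)), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Keep the old centroid if a cluster ends up empty.
        centroids = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids, clusters

# Hypothetical average speeds (km/h) of recorded driving segments, with no labels attached.
speeds = [28, 32, 98, 30, 105, 35, 110, 102]
initial = [float(min(speeds)), float(max(speeds))]

centroids, clusters = kmeans_1d(speeds, initial)
print(centroids)
print(clusters)
```

The algorithm separates slow and fast segments on its own; a human can then inspect each group and decide what it represents.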

Reinforcement Learning

Reinforcement learning (RL) enables an AI system to learn by trial and error. In a driving simulator, an RL agent is given rewards or penalties based on its actions, such as staying in lane or avoiding collisions. Over time, the system learns strategies that maximize long-term safety and efficiency.

RL is often used in:

- Strategic planning (e.g., roundabout negotiation)

- Complex control scenarios (e.g., smooth merging or overtaking)

- Simulated training before real-world deployment
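The reward-and-penalty idea can be shown with tabular Q-learning on a toy lane-keeping task. Everything about the environment below is an assumption made for the example: five lateral positions, a lane centre at position 2, and a reward equal to the negative distance from the centre. Real driving simulators and RL agents are far richer than this.

```python
import random

N_POSITIONS = 5        # lateral positions 0..4; position 2 is the lane centre
ACTIONS = (-1, 0, 1)   # steer left, hold, steer right

def step(position, action):
    """Toy simulator: move laterally, clamp to the road, penalise distance from centre."""
    new_position = min(N_POSITIONS - 1, max(0, position + action))
    reward = -abs(new_position - 2)
    return new_position, reward

def train(episodes=2000, steps=10, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_POSITIONS) for a in ACTIONS}
    for _ in range(episodes):
        state = rng.randrange(N_POSITIONS)
        for _ in range(steps):
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)                       # explore
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])  # exploit
            next_state, reward = step(state, action)
            best_next = max(q[(next_state, a)] for a in ACTIONS)
            # Q-learning update: nudge the estimate toward reward + discounted future value.
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q

q_table = train()
policy = {s: max(ACTIONS, key=lambda a: q_table[(s, a)]) for s in range(N_POSITIONS)}
print(policy)
```

After training, the greedy policy should steer toward the centre from either side and hold once it is there, even though no rule ever told the agent to do so.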

Deep Learning and Neural Networks

Deep learning is a subset of machine learning that uses artificial neural networks with many layers. These models excel at handling complex, high-dimensional data like camera images, LiDAR point clouds, and audio signals.

In autonomous vehicles, deep learning is central to:

- Object detection and segmentation

- Scene understanding

- Real-time sensor fusion

- Lane tracking in poor visibility

Some common neural network architectures used in AVs include:

- CNNs (Convolutional Neural Networks): Ideal for image recognition and object detection

- RNNs (Recurrent Neural Networks): Useful for sequential data like traffic flow prediction or behavior modeling

- Transformers: Increasingly used for end-to-end driving models due to their ability to capture long-range dependencies in data
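The "long-range dependencies" mentioned for Transformers come from the attention mechanism, which lets the model weigh every earlier time step directly. Below is a minimal sketch of scaled dot-product attention for a single query. The key and value vectors are invented, and a real model would learn the projections that produce them.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for one query over a sequence of key/value vectors."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output

# Four time steps; the oldest one (index 0) matches the query most strongly.
keys = [[4.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]
query = [1.0, 0.0]

weights, output = attend(query, keys, values)
print(weights)
```

Most of the weight lands on the oldest time step, regardless of how far back it is. A recurrent network, by contrast, has to carry that information forward step by step.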

Data Is the Fuel: Training and Simulation in Autonomous Systems

In the world of machine learning, data is everything. For self-driving cars, the quality and quantity of data used during training can determine how well the vehicle performs in real-world scenarios. Every decision made by an autonomous vehicle—whether it’s recognizing a stop sign or merging into highway traffic—is based on what the system has learned from past data.

Data Collection at Scale

Autonomous vehicles collect enormous amounts of raw data from their sensors every second. This includes:

- High-resolution video from cameras

- 3D spatial data from LiDAR

- Velocity and object detection from radar

- GPS and IMU (Inertial Measurement Unit) signals

- Environmental conditions (rain, snow, fog, etc.)

All of this sensor data is stored, labeled, and used to train machine learning models that replicate human-like perception and decision-making. Labeling it, for example by drawing bounding boxes around pedestrians or tagging road signs, is a massive undertaking, often assisted by AI-powered annotation tools and checked by human validators.
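One way an automated label can be checked against a human reviewer is intersection over union (IoU), a standard overlap measure for bounding boxes. The sketch below is only an illustration: the box coordinates and the 0.9 review threshold are assumptions, not values taken from any particular annotation tool.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix_min, iy_min = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix_max, iy_max = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union else 0.0

# Hypothetical pedestrian boxes in pixel coordinates.
auto_label = (100, 50, 200, 250)    # proposed by an annotation tool
human_label = (110, 50, 210, 250)   # drawn by a reviewer

overlap = iou(auto_label, human_label)
needs_review = overlap < 0.9        # assumed agreement threshold
print(round(overlap, 3), needs_review)
```

Boxes that agree closely can be accepted automatically, while low-overlap pairs are routed back to a person, which keeps human effort focused on the ambiguous cases.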

Simulation Environments

Real-world testing is essential, but it’s not always practical or safe to expose a vehicle to every possible edge case—such as a child darting into the street or an unexpected road closure. That’s where simulation environments come in.

Virtual testing platforms allow self-driving systems to:

- Recreate complex traffic scenarios

- Train reinforcement learning agents safely

- Fine-tune models using synthetic data

- Stress-test vehicle responses in edge cases (e.g., foggy nights, sudden tire blowouts)
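At its simplest, generating synthetic scenarios means sampling scenario parameters at random. The sketch below assumes a hypothetical `Scenario` description with four fields. Production simulators model far more (road geometry, sensor noise, other agents), and none of these names come from a real platform.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class Scenario:
    weather: str
    time_of_day: str
    pedestrian_speed_mps: float   # how fast the pedestrian crosses
    trigger_distance_m: float     # gap to the car when the pedestrian steps out

WEATHER = ("clear", "rain", "fog", "snow")
TIMES = ("day", "dusk", "night")

def sample_scenarios(count, seed=0):
    """Draw `count` randomized scenario descriptions; a fixed seed makes runs repeatable."""
    rng = random.Random(seed)
    return [
        Scenario(
            weather=rng.choice(WEATHER),
            time_of_day=rng.choice(TIMES),
            pedestrian_speed_mps=round(rng.uniform(0.5, 3.0), 2),
            trigger_distance_m=round(rng.uniform(5.0, 40.0), 1),
        )
        for _ in range(count)
    ]

scenarios = sample_scenarios(1000)
foggy_nights = [s for s in scenarios if s.weather == "fog" and s.time_of_day == "night"]
print(len(scenarios), len(foggy_nights))
```

Fixing the seed is a deliberate choice: when a model fails on a generated scenario, engineers can replay exactly the same conditions after retraining.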

Simulation can generate billions of miles of driving data in far less time and at far lower cost than physical testing. Companies such as Waymo, Tesla, and Cruise have relied heavily on simulated environments to supplement their real-world data.

Data Feedback Loop

One of the most powerful features of machine learning in autonomous vehicles is the feedback loop. Every time a self-driving car drives, it generates more data, which is then analyzed to refine the model. Over time, this creates a virtuous cycle:

- Real-world driving data is collected.

- Edge cases and system errors are identified.

- Machine learning models are updated and retrained.

- The updated system performs better in the same or similar scenarios.

This iterative process is what enables these vehicles to get safer, smarter, and more reliable with each trip.
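The four steps of the loop can be traced in a deliberately tiny example. The "model" here is a single threshold on a hypothetical detector score, and all of the data is invented. The point is only to show the cycle of collecting logs, finding errors, retraining, and re-evaluating.

```python
def fit_threshold(samples):
    """Pick the threshold with the fewest training errors; predicts 'obstacle' when score >= threshold."""
    candidates = sorted({score for score, _ in samples})
    def errors(t):
        return sum((score >= t) != is_obstacle for score, is_obstacle in samples)
    return min(candidates, key=errors)

def find_errors(threshold, logs):
    """Return the logged cases the current model gets wrong."""
    return [(score, label) for score, label in logs if (score >= threshold) != label]

# Initial training set: (detector score, is it really an obstacle?)
training = [(0.1, False), (0.2, False), (0.8, True), (0.9, True)]
threshold_v1 = fit_threshold(training)

# Step 1: real-world driving data is collected (hypothetical fleet logs).
fleet_logs = [(0.5, True), (0.6, True), (0.3, False), (0.85, True)]

# Step 2: edge cases and system errors are identified.
errors_v1 = find_errors(threshold_v1, fleet_logs)

# Step 3: the model is retrained with the failures added.
threshold_v2 = fit_threshold(training + errors_v1)

# Step 4: the updated model is checked on the same scenarios.
errors_v2 = find_errors(threshold_v2, fleet_logs)
print(threshold_v1, len(errors_v1), threshold_v2, len(errors_v2))
```

In this toy run the first model misses two faint obstacles, and the retrained model catches both. Real fleets run the same loop over far larger datasets and far more complex models.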

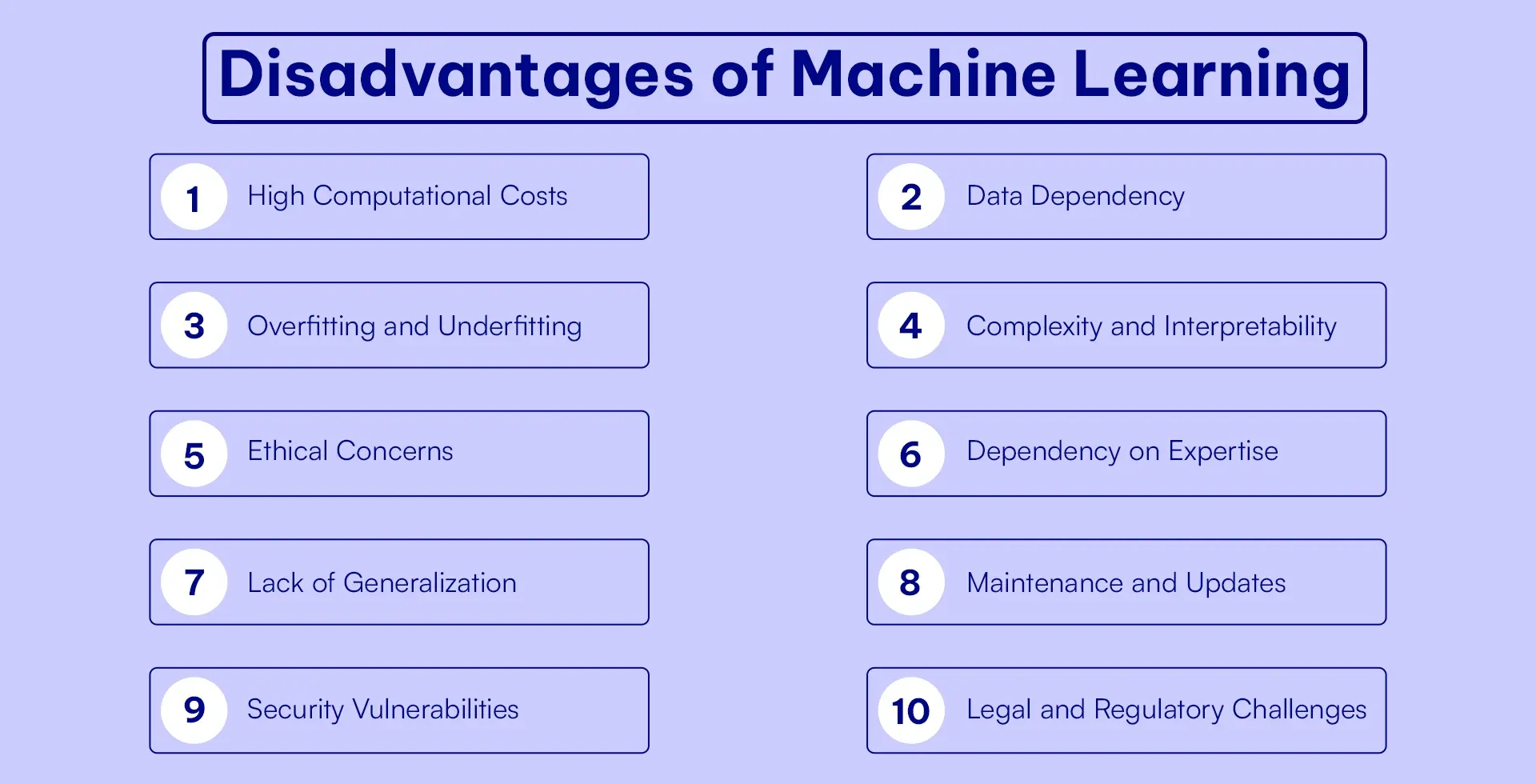

Challenges and Limitations of Machine Learning in AVs

While machine learning has revolutionized autonomous driving, it is far from perfect. Self-driving systems still face significant challenges that must be addressed before they can be fully trusted to operate without human oversight. These limitations are not just technical—they also touch on safety, ethics, regulation, and public acceptance.

Edge Cases and the Long Tail Problem

Autonomous systems struggle with rare or unpredictable scenarios—often referred to as edge cases. These include:

- Unusual pedestrian behavior (e.g., someone in a costume crossing the road)

- Debris on the highway

- Temporary construction zones with no signage

- Erratic drivers or vehicles violating traffic rules

Even with billions of miles of training data, some situations are too rare or complex for the machine learning model to handle reliably. This is known as the long tail problem—where the edge cases, although individually rare, collectively occur frequently enough to matter.
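A quick calculation shows why individually rare events still matter. The numbers below are assumptions chosen for illustration: 10,000 distinct edge-case types, each with a one-in-a-million chance of appearing on any given mile, all treated as independent.

```python
def prob_any_edge_case(per_type_prob, n_types, miles):
    """P(at least one edge case), assuming independent types and independent miles."""
    p_none_per_mile = (1.0 - per_type_prob) ** n_types
    return 1.0 - p_none_per_mile ** miles

# Chance of meeting one specific edge case during a 100-mile drive.
p_single_type = prob_any_edge_case(1e-6, n_types=1, miles=100)

# Chance of meeting any of 10,000 such edge cases during the same drive.
p_any_type = prob_any_edge_case(1e-6, n_types=10_000, miles=100)

print(f"{p_single_type:.4%} vs {p_any_type:.1%}")
```

Under these assumptions, a single edge-case type shows up on roughly 0.01% of such drives, while some edge case shows up on roughly 63% of them. That gap is the long tail problem in miniature.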

Lack of Generalization

Machine learning models can be incredibly powerful—but only within the context of the data they’ve been trained on. If a model has only learned to drive in sunny California, it may fail in snowy conditions or on unfamiliar road types.

This lack of generalization is a major hurdle. Unlike humans, who can apply common sense and abstract reasoning to new situations, AI systems often require additional training data and fine-tuning to adapt.

Transparency and Explainability

The deep neural networks used in autonomous systems are often seen as “black boxes.” They can make decisions that are difficult to interpret or explain, even for the engineers who built them.

This raises critical concerns:

- How do we audit an AV’s decision after an accident?

- Can the system explain why it chose to swerve or brake?

- What level of accountability can we assign to a machine?

Explainability is crucial for regulatory approval, public trust, and ethical transparency in autonomous systems.

Data Bias and Ethical Dilemmas

Machine learning models are only as good as the data they’re trained on. If the training data is biased—due to overrepresentation of certain geographies, demographics, or behaviors—the resulting system can inherit those biases.
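A first, very basic check for this kind of bias is simply counting how often each condition appears in the training set. The sketch below uses a hypothetical `weather` field and an assumed 10% minimum share. Real audits look at many more attributes and at model performance per group, not just counts.

```python
from collections import Counter

def underrepresented(samples, key, min_share):
    """Return {value: share} for every value of `key` whose share is below `min_share`."""
    counts = Counter(sample[key] for sample in samples)
    total = sum(counts.values())
    return {value: count / total for value, count in counts.items() if count / total < min_share}

# Hypothetical dataset metadata: 70 sunny, 25 rainy, and 5 snowy driving clips.
dataset = (
    [{"weather": "sunny"}] * 70
    + [{"weather": "rain"}] * 25
    + [{"weather": "snow"}] * 5
)

gaps = underrepresented(dataset, key="weather", min_share=0.10)
print(gaps)
```

Here snow makes up only 5% of the clips, which would flag it as a condition that needs more data before the model can be trusted in it.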

Ethical dilemmas also arise in decision-making. For example:

- Should the vehicle prioritize the safety of passengers over pedestrians?

- How does it choose between two harmful outcomes in a split-second scenario?

These questions challenge not just engineers, but policymakers and society at large.

The Future of Machine Learning in Autonomous Driving

Machine learning continues to be the driving force behind advancements in autonomous vehicle technology. As the field evolves, we are witnessing rapid progress in both hardware capabilities and algorithmic sophistication. The path to full autonomy may be complex, but the momentum is undeniable.

Improved Real-Time Performance

Next-generation machine learning models are being designed to process vast streams of sensor data with ultra-low latency. With the help of specialized AI chips and edge computing units, future self-driving cars will be able to react even faster and more accurately to changing environments—approaching or even surpassing human-level response times.

Transfer Learning and Federated Learning

New training techniques like transfer learning—where a model trained on one domain can adapt to a new task—can significantly reduce the need for massive datasets. Federated learning allows AVs to share knowledge across a distributed fleet without transferring raw data, enhancing learning efficiency and protecting user privacy.
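The core of federated learning can be sketched as federated averaging: each vehicle trains locally and sends back only its model weights, which a server combines in proportion to how much data each vehicle saw. The two-weight "models" and sample counts below are invented for the example.

```python
def federated_average(updates):
    """Weighted average of model weights; `updates` is a list of (weights, n_samples)."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]

# Hypothetical local models from two vehicles; raw sensor data never leaves the cars.
vehicle_updates = [
    ([1.0, 2.0], 100),   # trained on 100 local samples
    ([3.0, 4.0], 300),   # trained on 300 local samples
]

global_weights = federated_average(vehicle_updates)
print(global_weights)
```

The vehicle with more data pulls the shared model further toward its own weights, and the server never needs access to the underlying camera or LiDAR recordings.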

These advancements promise to make machine learning in autonomous systems faster, safer, and more scalable across different regions and manufacturers.

Collaboration Between AI and Human Drivers

Before full autonomy (SAE Level 5) becomes mainstream, many experts foresee a prolonged phase of collaborative autonomy, where machine learning augments, rather than replaces, human drivers. These systems will act as intelligent copilots, anticipating risks, providing real-time suggestions, and intervening only when necessary.

This phased approach not only improves safety but also builds public trust, offering a smoother transition toward fully autonomous transportation.

Regulatory and Societal Readiness

Even the most advanced machine learning system won’t succeed without regulatory frameworks, infrastructure upgrades, and societal acceptance. Governments and automakers must work together to develop standards for safety validation, liability, and ethical governance.

Ultimately, machine learning is just one part of a much larger ecosystem that must evolve in harmony.

Conclusion

Machine learning is the brain behind the wheel of modern autonomous vehicles. From sensing the environment and predicting traffic behavior to making complex driving decisions, it plays a central role in every aspect of self-driving technology. While challenges remain—especially around edge cases, bias, and explainability—advancements in data, simulation, and model design are accelerating the road to autonomy.

As machine learning matures, the promise of safer, smarter, and more accessible transportation becomes not just a vision of the future—but an emerging reality.

FAQ About Machine Learning in Self-Driving Cars

How is machine learning used in self-driving cars?

Machine learning is used in self-driving cars to analyze sensor data, recognize objects, predict traffic behavior, and make driving decisions. It powers core functions like lane detection, obstacle avoidance, and path planning.

What types of machine learning are used in autonomous vehicles?

Self-driving cars use several types of machine learning, including:

- Supervised learning for object recognition

- Unsupervised learning for pattern discovery

- Reinforcement learning for decision-making

- Deep learning for real-time perception and sensor fusion

Why is machine learning important for autonomous driving?

Machine learning enables vehicles to learn from experience, adapt to dynamic environments, and improve over time. Without it, autonomous cars would not be able to handle the complexity and unpredictability of real-world roads.

What are the challenges of using AI in self-driving cars?

Key challenges include:

- Handling rare or unexpected scenarios (edge cases)

- Ensuring safety in unfamiliar environments

- Explaining AI decisions (transparency)

- Preventing data bias and ethical dilemmas

How do autonomous vehicles get training data?

Training data is collected from real-world driving, simulations, and synthetic environments. It includes camera footage, LiDAR point clouds, radar data, GPS signals, and more—often labeled by AI-assisted tools and human reviewers.